HIPAA violations carry severe financial penalties that can cripple healthcare startups overnight. Yet testing coverage remains inadequate across the industry. Critical security gaps go undetected until production. The paradox: regulatory compliance demands exhaustive testing, but traditional QA team scaling takes months and strains already-tight budgets.

I spent three years watching healthcare technology teams manually execute the same test scenarios while their competitors shipped features faster. The hiring model itself creates the bottleneck. It no longer matches the velocity healthcare technology demands in 2025.

Recent industry research shows 35% of QA teams rank increasing test coverage as their number one priority. Despite this urgency, most organizations struggle to scale quality assurance without proportional headcount growth.

Where Traditional Testing Budgets Actually Go

QA resources disappear into activities most teams don’t track. Tool subscriptions and salaries appear in budgets. The real drains consume capacity invisibly.

Time Allocation in Practice:

| Activity | Manual Testing Focus | AI-Assisted Focus |
| --- | --- | --- |
| Regression testing | Primary time investment | Minimal oversight |
| Test maintenance | Constant burden | Significantly reduced |
| HIPAA compliance checks | Manual verification | Automated validation |
| Exploratory testing | Limited capacity | Primary focus area |

Traditional scaling reveals uncomfortable mathematics. Hiring experienced QA engineers takes substantial time. Additional months pass during onboarding before productivity increases materialize. During this window, competitors ship multiple feature releases while you’re still conducting interviews.

False positives create another invisible cost drain. Test scripts break when interface elements shift position. Engineers waste hours investigating non-issues. Teams spend significant QA capacity managing flaky tests that provide zero actual value.

Industry data shows 58% of teams report defects slipping into production due to rapid release pressures. The traditional manual testing model cannot keep pace with modern development velocity.

What Changed in 2024-2025

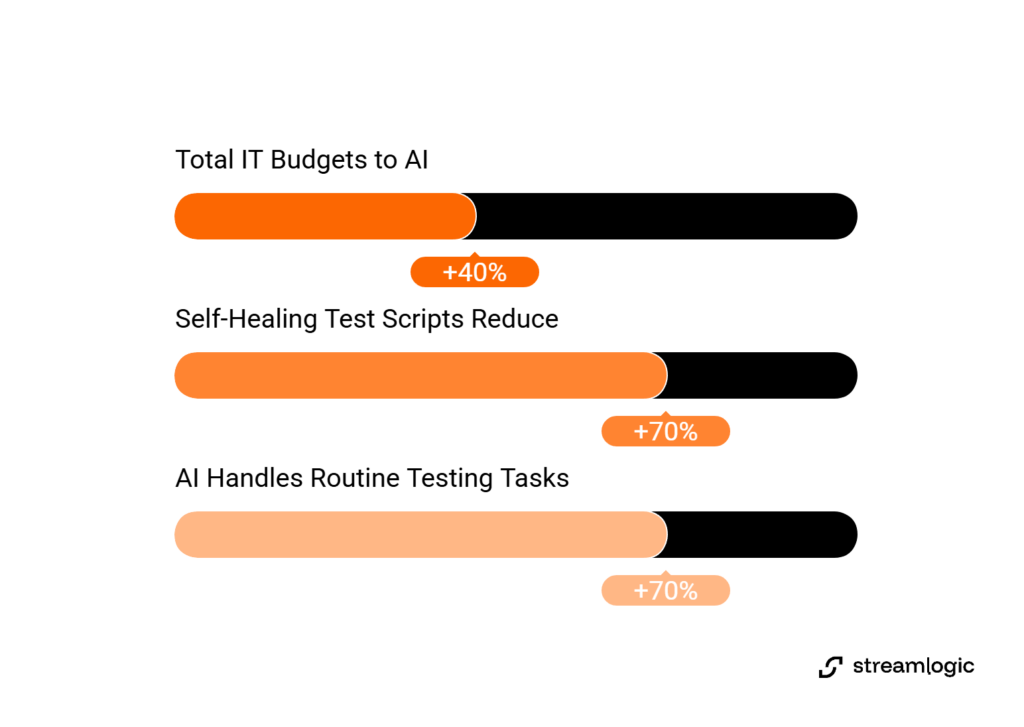

AI testing adoption accelerated dramatically across software companies from 2023 to 2025. Value for mHealth apps varies significantly depending on which capabilities you prioritize. According to IDC research, organizations now allocate approximately 40% of their total IT budgets to various AI testing applications. This reflects how central the technology has become.

Self-healing test scripts reduce maintenance overhead by up to 70%. They automatically adapt to minor UI changes like CSS updates or element position shifts. Visual validation becomes critical for mHealth app development. Healthcare interfaces demand pixel-perfect accuracy for medication dosing screens and patient data displays. Autonomous test generation helps discover edge cases that human testers might miss, particularly in complex clinical workflows.

Marketing promises diverge from engineering reality here. “Self-healing” fails completely when workflows change or new features launch. Visual validation works brilliantly for layout verification. It cannot assess whether clinical decision support algorithms recommend correct treatment protocols.
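To make the distinction concrete, here is a minimal sketch of one way a "self-healing" lookup could work: try several locators for the same element in priority order. The page model, the locator strings, and the `find_with_fallback` helper are all hypothetical and stand in for a real browser driver; commercial tools may use very different techniques.

```python
from typing import Optional

# Hypothetical page model: maps a locator string to an element's text.
# A real suite would query a browser or device driver instead of a dict.
Page = dict[str, str]


def find_with_fallback(
    page: Page, locators: list[str]
) -> tuple[Optional[str], Optional[str]]:
    """Try locators in priority order; return (element_text, locator_used)."""
    for locator in locators:
        if locator in page:
            return page[locator], locator
    return None, None


# Ordered from most to least stable (an assumed convention for this sketch).
DOSE_FIELD_LOCATORS = [
    "data-testid=dose-input",    # dedicated test hook
    "aria-label=Dose (mg)",      # accessibility label
    "css=.med-form input.dose",  # styling-dependent, most fragile
]

# A redesign removed the test hook and renamed CSS classes.
# The lookup still succeeds through the accessibility label.
redesigned_page = {"aria-label=Dose (mg)": "5", "css=.dosage-v2 input": "5"}
print(find_with_fallback(redesigned_page, DOSE_FIELD_LOCATORS))

# A workflow change moved the field to another screen.
# No locator matches, so nothing can be "healed".
moved_page = {"css=.summary": "Review order"}
print(find_with_fallback(moved_page, DOSE_FIELD_LOCATORS))
```

The second call returning nothing is the case that matters: fallback locators absorb cosmetic changes, but a changed workflow still needs a person to rewrite the test.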

Healthcare QA teams cannot simply replace human expertise with tools. Research shows AI-driven automation can handle up to 70% of routine testing tasks. Successful implementations preserve human judgment for compliance decisions and clinical workflow validation.

Manual to Automated: How Teams Scale Testing

Implementation data from healthcare teams reveals patterns vendors don’t discuss. Companies that successfully scale testing share common approaches. Typical starting point: small QA teams with minimal automation coverage facing lengthy regression cycles.

The shift requires specific team composition choices rather than just tool purchases. Successful teams combine QA automation engineers with ML-aware practitioners. These practitioners understand both testing frameworks and AI system behavior. Tool selection varies. Most implementations use specialized platforms for web and mobile app automation testing across iOS and Android devices.

Smart workflow design runs automated tests in parallel with manual QA during transition periods. This validation phase catches integration issues before full commitment to new approaches. Industry patterns point to 80% test coverage as the optimal target. Research confirms that reaching 80% end-to-end test coverage prevents bugs from reaching production while keeping automation goals realistic. Pursuing 100% automation proves both impossible and counterproductive.

Implementation mistakes surface within weeks in production. Initial tool choices sometimes prove inadequate. Teams switch platforms within the first month. Over-reliance on vendor promises about “autonomous” capabilities creates false confidence. This leads to missed defects. The critical pattern: team composition and healthcare domain expertise determine success more than which specific tools you select.

HIPAA Compliance Testing Under AI

Compliance creates unique constraints for mHealth app developers. Generic testing tools ignore these requirements. Handling protected health information in automated test environments requires synthetic data. This data must mimic production complexity without exposing real patient records. Every AI-generated test needs audit trail documentation. The documentation must show which scenarios were validated and when.
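As a rough illustration of the synthetic data requirement, the sketch below fabricates patient-like records from fixed word lists and a seeded random generator. Every field name, the `SYN-` identifier prefix, and the value lists are assumptions made for this example; a production generator would need far richer clinical structure.

```python
import random
from datetime import date, timedelta

# Obviously fake name parts so records can never be mistaken for real patients.
FIRST_NAMES = ["Alex", "Jordan", "Sam", "Taylor", "Morgan"]
LAST_NAMES = ["Testpatient", "Sample", "Synthetic", "Example"]
MEDICATIONS = ["metformin", "lisinopril", "atorvastatin", "albuterol"]


def synthetic_patient(rng: random.Random, index: int) -> dict:
    """Build one fabricated record; no field is derived from real data."""
    birth = date(1940, 1, 1) + timedelta(days=rng.randrange(0, 365 * 70))
    return {
        "patient_id": f"SYN-{index:06d}",  # prefix marks the record as synthetic
        "name": f"{rng.choice(FIRST_NAMES)} {rng.choice(LAST_NAMES)}",
        "birth_date": birth.isoformat(),
        "medications": rng.sample(MEDICATIONS, k=rng.randint(0, 3)),
        "allergies_recorded": rng.random() < 0.3,
    }


def synthetic_cohort(size: int, seed: int = 42) -> list[dict]:
    """Seeded so a failing test can be reproduced with identical data."""
    rng = random.Random(seed)
    return [synthetic_patient(rng, i) for i in range(size)]


for patient in synthetic_cohort(3):
    print(patient)
```

Seeding the generator is a deliberate choice: when an automated test fails, the same cohort can be regenerated for investigation without storing any dataset that might later be confused with production data.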

Several compliance gaps remain unsolved by current automation technology. State-specific consent flow variations require human review because regulations differ across jurisdictions. Clinical workflow context decisions need medical domain knowledge. AI cannot reliably provide this knowledge. Multi-step authorization edge cases involve complex patient privacy rules. Automated systems frequently misinterpret these rules.

Medtech mHealth app teams still need healthcare domain expertise despite advanced automation capabilities. AI handles the mechanical work of executing thousands of test scenarios. Human judgment determines whether those scenarios actually validate regulatory requirements.

Successful teams implement hybrid models. AI automation handles repetitive regression testing. Experienced QA engineers focus on compliance validation and clinical workflow verification. This division of labor maximizes both coverage and regulatory confidence.

Teams vs. Hiring

Two fundamental approaches exist for scaling quality assurance. Each carries distinct tradeoffs.

Traditional Hiring Approach:

- Expanding QA teams through recruitment

- Extended timelines for sourcing qualified candidates

- Additional onboarding periods before productivity

- Substantial ongoing salary and benefits costs

- Difficulty finding healthcare domain expertise

AI-Assisted Team Structure:

- Smaller specialized team with automation tools

- Faster assembly with right partnerships

- Reduced time to measurable impact

- Tool costs offset by headcount efficiency

- Focus on high-value manual testing

Industry research reveals an important ratio: expect approximately one QA engineer for every three developers to maintain adequate coverage. This benchmark helps size teams appropriately whether scaling through hiring or automation.
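The ratio above reduces to simple arithmetic. The helper below is a hypothetical sizing aid, and rounding up to a whole engineer is an assumption of this sketch rather than part of the benchmark.

```python
import math


def qa_engineers_needed(developers: int, devs_per_qa: int = 3) -> int:
    """Apply the roughly one-QA-per-three-developers benchmark, rounding up."""
    if developers < 0 or devs_per_qa <= 0:
        raise ValueError("developers must be >= 0 and devs_per_qa must be > 0")
    return math.ceil(developers / devs_per_qa)


print(qa_engineers_needed(10))  # 4
print(qa_engineers_needed(12))  # 4
```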

Small applications with minimal critical user flows lack sufficient complexity to justify automation investment. Very small teams cannot properly maintain AI testing infrastructure. Legacy systems with minimal change velocity see negligible returns because regression testing frequency stays low.

Implementation Without Disruption

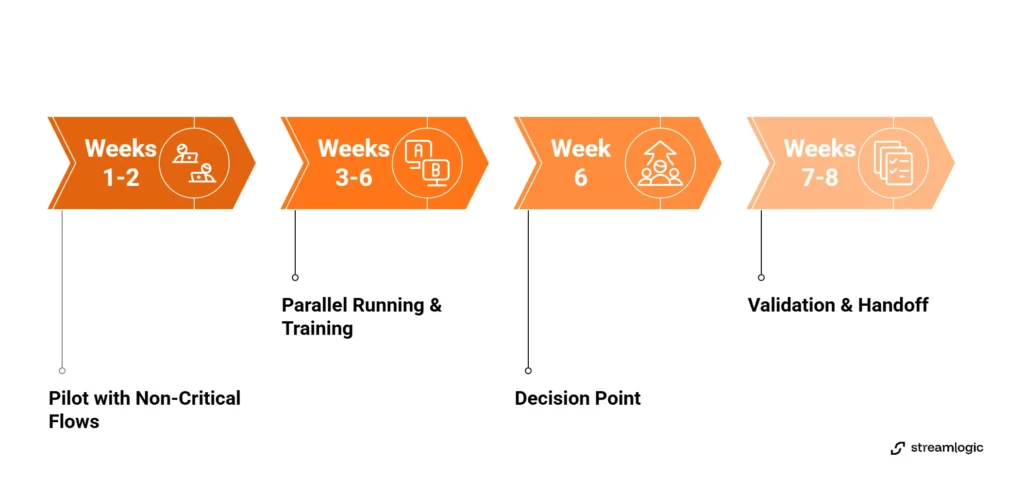

Healthcare technology teams that successfully introduce automation without disrupting development cycles follow predictable patterns.

Weeks 1-2: Pilot with Non-Critical Flows

Select low-risk user journeys like account login or basic profile updates. Set up test environments using synthetic patient data that mirrors production complexity. This validation period determines tool fit before larger commitment.

Weeks 3-6: Parallel Running

Run automated tests alongside existing manual QA processes. Train team members on test maintenance procedures during this period. Measure stability metrics and coverage increases week over week. Week six becomes the decision point. Proceed with current tools or pivot to different platforms.
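One stability metric worth tracking during parallel running is the share of tests that behave inconsistently. The sketch below assumes a simple definition: a test is flaky if it both passed and failed against the same commit. The run-history format and function names are hypothetical.

```python
from collections import defaultdict

# Each run is (test_name, commit_sha, passed).
Run = tuple[str, str, bool]


def flaky_tests(runs: list[Run]) -> set[str]:
    """Return tests with mixed pass/fail outcomes on a single commit."""
    outcomes: dict[tuple[str, str], set[bool]] = defaultdict(set)
    for name, sha, passed in runs:
        outcomes[(name, sha)].add(passed)
    return {name for (name, _sha), seen in outcomes.items() if len(seen) == 2}


def flaky_rate(runs: list[Run]) -> float:
    """Fraction of distinct tests that were flaky at least once."""
    names = {name for name, _sha, _passed in runs}
    return len(flaky_tests(runs)) / len(names) if names else 0.0


runs = [
    ("login", "a1", True),
    ("login", "a1", False),    # same commit, different result: flaky
    ("profile", "a1", True),
    ("profile", "b2", False),  # different commit: may be a real regression
    ("refill", "a1", True),
    ("refill", "a1", True),
]
print(flaky_tests(runs))           # {'login'}
print(round(flaky_rate(runs), 2))  # 0.33
```

Comparing this number week over week gives the week-six decision something measurable to rest on, alongside coverage.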

Weeks 7-8: Validation Gates and Handoff

Complete audit trail documentation for HIPAA compliance requirements. Integrate testing into CI/CD pipelines so every code commit triggers automated validation. Define sustained operations processes. Establish who handles test failures and maintenance windows.
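An audit trail entry can be as simple as one structured record per validated scenario. The sketch below is illustrative only: the field names are assumptions, not a schema required by HIPAA, and a real system would write to tamper-evident storage rather than an in-memory list.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional


def audit_entry(
    scenario_id: str,
    scenario_text: str,
    result: str,
    approved_by: str,
    commit_sha: str,
    now: Optional[datetime] = None,
) -> dict:
    """Record which scenario was validated, when, and who approved it."""
    timestamp = (now or datetime.now(timezone.utc)).isoformat()
    return {
        "scenario_id": scenario_id,
        # Hash of the scenario text shows exactly which version was executed.
        "scenario_sha256": hashlib.sha256(scenario_text.encode("utf-8")).hexdigest(),
        "result": result,
        "approved_by": approved_by,
        "commit_sha": commit_sha,
        "validated_at": timestamp,
    }


def append_entry(log_lines: list[str], entry: dict) -> None:
    """Append one JSON line; existing lines are never modified."""
    log_lines.append(json.dumps(entry, sort_keys=True))


log: list[str] = []
append_entry(
    log,
    audit_entry(
        scenario_id="consent-flow-001",
        scenario_text="Patient revokes consent; record access is blocked",
        result="passed",
        approved_by="qa.lead@example.com",
        commit_sha="abc1234",
    ),
)
print(log[0])
```

Emitting an entry like this from the CI/CD job on every commit keeps the documentation current without a separate manual step.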

Red flags signal wrong tool selection: excessive false positive rates, team resistance due to complexity, vendors over-promising “autonomous” capabilities that require constant manual intervention. Hidden costs in execution pricing can destroy ROI projections if not identified during pilot phases.

For teams serious about custom mHealth app development, partnering with an experienced mHealth app development company accelerates this timeline significantly. Partners help teams avoid common implementation mistakes.

What QA Teams Actually Need to Know

Skill gaps determine success more than tool selection. Some capabilities can be trained internally within reasonable timeframes. These include basic test automation frameworks, CI/CD integration concepts, and synthetic test data generation techniques. Other skills require hiring or contracting because training takes too long.

Healthcare domain QA expertise takes years to develop. This particularly applies to HIPAA and FDA compliance knowledge. ML and AI testing for algorithm validation requires both quality assurance and machine learning backgrounds. Mobile device integration patterns across iOS and Android ecosystems involve platform-specific expertise. HL7 and FHIR integration experience matters for healthcare interoperability testing.

Integration with existing CI/CD pipelines typically requires several weeks for proper setup. Budget additional time for false positive tuning. Initial configurations often generate noise. Plan maintenance windows for updating test scenarios as application features evolve.

Three vendor questions expose weak tools immediately. First, ask about self-healing false positive rates. Good vendors answer with specific metrics and concrete data. Bad vendors offer vague responses about systems “learning over time” without measurable outcomes. Second, request demonstration of HIPAA audit trail capabilities. Strong platforms provide built-in compliance reporting. Weak solutions require manual log exports. Third, inquire about median customer time-to-value. Best-in-class vendors achieve measurable ROI within reasonable timeframes. Vendors requiring extended implementation periods signal complexity that erodes value.

Teams exploring mobile app automation testing should evaluate vendors against these specific criteria. General feature checklists obscure what actually matters in production.

The Uncomfortable Truth About “Autonomous” Testing

Marketing materials promise fully autonomous testing that requires zero human intervention. Production environments tell a different story. Industry observations show 70-80% automated coverage represents the optimal target for most mHealth apps. Pursuing 100% automation that vendors suggest is achievable proves both wasteful and dangerous.

The remaining 20-30% requires human judgment for critical areas. Exploratory testing of new features cannot be automated because the test scenarios don’t yet exist. Complex compliance scenarios need healthcare domain knowledge to interpret state-specific regulations. UX and usability evaluation demands subjective assessment that AI cannot reliably provide.

Human-in-the-loop scenarios that still matter include multi-step clinical workflows requiring medical logic understanding, edge cases with healthcare domain context, and compliance decisions based on jurisdiction-specific regulations. First-time feature testing must occur manually before automation patterns can be established.

Over-automation of rarely-used flows generates maintenance burden without corresponding value. False confidence in “autonomous” capabilities leads to missed defects. Neglecting team training on how AI tools actually work creates dependency without understanding. Replacing skilled QA judgment with blind tool reliance undermines quality.

Research confirms a specific pattern with proper implementation. QA teams can automate up to 70% of routine tasks. They must preserve human expertise for high-value work. This becomes especially critical when building product development teams for healthcare applications where compliance stakes remain high.

FAQ

Can AI testing tools really replace manual QA engineers?

No. AI automation assists QA engineers rather than replacing them. Industry patterns show optimal approaches combine 70-80% automated coverage with 20-30% strategic manual testing. Experienced QA engineers remain essential for several tasks. They configure and maintain AI tools. They handle exploratory testing for new features. They make compliance judgment calls that require healthcare domain knowledge. They validate that AI-generated tests don’t create false confidence. AI lets your QA team focus on high-value work instead of repetitive regression testing.

What’s the biggest mistake companies make when implementing AI testing?

Companies treat it as a tool problem instead of a team problem. They purchase AI automation platforms thinking tools will “solve” their QA bottleneck. Then they wonder why delivery doesn’t match expectations. What actually happens: tools sit unused because no one configured them properly. Teams lack ML and testing hybrid skills for maintenance. Missing healthcare domain expertise means wrong scenarios get automated. Over-reliance on “autonomous” features that aren’t truly autonomous causes problems. The success pattern: start with team composition and right skills mix, then select tools that fit your team’s capabilities.

Is AI testing HIPAA compliant? How do we handle PHI in automated tests?

HIPAA compliance depends on your implementation, not on the AI testing platform itself. Critical requirements include PHI handling using synthetic test data only, never real patient information. Test environments must be properly segmented. Data masking applies to production-like data. Audit trail documentation covers all AI-generated test cases for compliance reviews. This includes logs of who approved automated scenarios and tracking of coverage changes over time. Vendor due diligence should verify SOC 2 reporting and willingness to sign a HIPAA business associate agreement (BAA). Understand where test data is stored. Review security practices for tool access. Most enterprise platforms have HIPAA-ready options, but you must configure them correctly. That is one more reason healthcare domain expertise matters on your QA team.

Should we build AI testing capability in-house or partner with a specialized team?

Several factors determine the right approach. Build in-house if you have an extended timeline to develop capability, budget for larger dedicated teams, existing strong QA leadership with ML and AI experience, and a stable product roadmap. Partner or augment if you need faster results, lack healthcare QA domain expertise internally, want to validate the approach before a large commitment, or face a compliance deadline or competitive pressure.

Hybrid approaches prove most common in practice. These use core in-house QA engineers for product knowledge. They access specialized skills like ML testing and healthcare compliance via contractors. Teams scale capacity based on release cycles. Finding QA engineers with both automation skills and healthcare domain knowledge proves difficult. This explains why many companies choose partnership rather than extended hiring cycles.

Building vs. Hiring: A Strategic Choice

Finding specialized skills quickly creates the bottleneck. Tool selection matters less. Your timeline to impact determines which path makes sense. Traditional hiring requires extended periods to reach full productivity. Partnering for specialized teams delivers impact faster. Hybrid approaches combine core in-house resources with specialized contractors.

Decision-makers should start with an honest assessment of current test coverage. Many teams don’t actually know their baseline metrics. Calculate the opportunity cost of extended hiring cycles versus team partnership options. Run short pilot projects to validate approaches before full commitment.

Healthcare technology development cycles keep accelerating. Teams that successfully scale quality assurance without proportional headcount growth win competitive advantages. These advantages compound over time. Most teams now ask how quickly they can implement AI automation rather than whether to adopt it.

If you’re ready to explore what AI-assisted testing could mean for your mHealth app, book a session with our CTO Denis Abramenko for an honest evaluation of your current situation and practical next steps.